
While large language models (LLMs) have mastered text (and other modalities to some extent), they lack the physical "common sense" needed to operate in dynamic, real-world environments. This has limited the deployment of AI in areas like manufacturing and logistics, where understanding cause and effect is crucial.

Meta’s latest model, V-JEPA 2, takes a step toward bridging this gap by learning a world model from video and physical interactions.

V-JEPA 2 can help create AI applications that need to predict outcomes and plan actions in unpredictable environments with many edge cases. This approach offers a clear path toward more capable robots and more advanced automation in physical environments.

How a ‘world model’ learns to plan

Humans develop physical intuition early in life by observing their surroundings. If you see a ball thrown, you instinctively know its trajectory and can predict where it will land. V-JEPA 2 learns a similar "world model," which is an AI system’s internal simulation of how the physical world operates.

The model is built on three core capabilities that are essential for enterprise applications: understanding what is happening in a scene, predicting how the scene will change in response to an action, and planning a sequence of actions to achieve a specific goal. As Meta states in its blog, its "long-term vision is that world models will enable AI agents to plan and reason in the physical world."

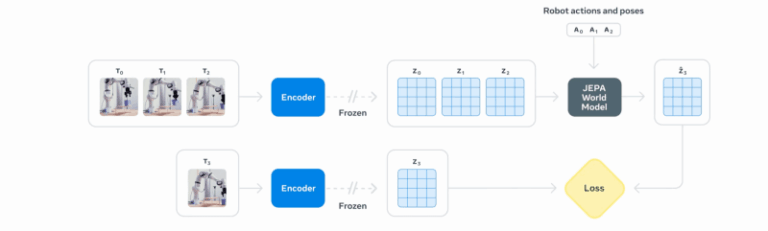

The model’s architecture, called the Video Joint Embedding Predictive Architecture (V-JEPA), consists of two key parts. An "encoder" watches a video clip and condenses it into a compact numerical summary, called an embedding. This embedding captures the essential information about the objects in the scene and their relationships. A second component, the "predictor," then takes this summary and imagines how the scene will evolve, generating a prediction of what the next summary will look like.
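
To make the encoder/predictor split concrete, here is a minimal Python sketch. It is not Meta's code: the `ToyEncoder` and `ToyPredictor` classes, their random linear weights, and the tiny sizes are hypothetical stand-ins (the real encoder is a large trained network). It only illustrates the data flow described above: many pixel values go in, a short embedding comes out, and the predictor maps that embedding to a guess at the next one.

```python
import random

# Hypothetical toy sizes; the real V-JEPA 2 encoder is a large trained network.
FRAME_SIZE = 12  # flattened pixel values per toy "frame"
EMBED_DIM = 4    # length of the compact summary (embedding)


def random_matrix(rows, cols, rng):
    """Random weights standing in for trained parameters."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    """Plain matrix-vector product."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class ToyEncoder:
    """Condenses a clip (a list of frames) into one embedding vector."""

    def __init__(self, rng):
        self.weights = random_matrix(EMBED_DIM, FRAME_SIZE, rng)

    def __call__(self, clip):
        per_frame = [matvec(self.weights, frame) for frame in clip]
        # Average the per-frame projections into a single summary.
        return [sum(values) / len(per_frame) for values in zip(*per_frame)]


class ToyPredictor:
    """Maps the current embedding to a guess at the next embedding."""

    def __init__(self, rng):
        self.weights = random_matrix(EMBED_DIM, EMBED_DIM, rng)

    def __call__(self, embedding):
        return matvec(self.weights, embedding)


rng = random.Random(0)
encoder, predictor = ToyEncoder(rng), ToyPredictor(rng)
clip = [[rng.random() for _ in range(FRAME_SIZE)] for _ in range(8)]  # 8 toy frames
current = encoder(clip)
predicted_next = predictor(current)
print(len(clip) * FRAME_SIZE, "pixel values ->", len(current), "embedding values")
```

The key point is that the predictor never touches pixels: both its input and its output live in the same compact embedding space.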

This architecture is the latest evolution of the JEPA framework, which was first applied to images with I-JEPA and now extends to video, reflecting a consistent approach to building world models.

Unlike generative AI models that try to predict the exact color of every pixel in a future frame (a computationally intensive task), V-JEPA 2 operates in an abstract space. It focuses on predicting the high-level features of a scene, such as an object’s position and trajectory, rather than its texture or background details. That focus, combined with a compact size of just 1.2 billion parameters, makes it far more efficient than other, larger models.

That translates to lower compute costs and makes the model better suited for deployment in real-world settings.

Learning from observation and action

V-JEPA 2 is trained in two stages. First, it builds its foundational understanding of physics through self-supervised learning, watching more than a million hours of unlabeled internet video. By simply observing how objects move and interact, it develops a general-purpose world model without any human guidance.

In the second stage, this pre-trained model is fine-tuned on a small, specialized dataset. By processing just 62 hours of video showing a robot performing tasks, along with the corresponding control commands, V-JEPA 2 learns to connect specific actions to their physical outcomes. The result is a model that can plan and control actions in the real world.
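
The second stage can be illustrated with a deliberately simple sketch, assuming the frozen first-stage encoder has already turned each logged frame into an embedding. The hypothetical `fit_action_gains` function learns how each control command shifts the embedding, using a per-dimension linear least-squares fit on simulated (embedding, action, next embedding) triples. V-JEPA 2's actual action-conditioned predictor is a neural network; the linear form here is an assumption chosen only to show how logged actions and outcomes become a model of cause and effect.

```python
import random


def fit_action_gains(transitions):
    """Least-squares fit of next = current + gain * action, one gain per dimension.

    transitions: list of (embedding, action, next_embedding) tuples of equal-length lists.
    The diagonal, linear form is a simplifying assumption for illustration.
    """
    dims = len(transitions[0][0])
    gains = []
    for d in range(dims):
        # Closed-form 1-D least squares on the change in embedding.
        numerator = sum(a[d] * (n[d] - z[d]) for z, a, n in transitions)
        denominator = sum(a[d] ** 2 for _, a, _ in transitions)
        gains.append(numerator / denominator)
    return gains


def predict_next(embedding, action, gains):
    """Use the fitted gains to predict the outcome of an action."""
    return [z + g * a for z, a, g in zip(embedding, action, gains)]


# Simulated "robot log": hidden dynamics with gains [0.5, -0.2] plus small noise.
rng = random.Random(42)
true_gains = [0.5, -0.2]
log = []
for _ in range(200):
    z = [rng.uniform(-1, 1) for _ in true_gains]
    a = [rng.uniform(-1, 1) for _ in true_gains]
    n = [zi + g * ai + rng.gauss(0, 0.01) for zi, ai, g in zip(z, a, true_gains)]
    log.append((z, a, n))

gains = fit_action_gains(log)
print([round(g, 2) for g in gains])
print(predict_next([0.0, 0.0], [1.0, 1.0], gains))
```

Because the expensive physics knowledge lives in the pre-trained embedding, only the small action-to-outcome mapping has to be learned from robot data, which is why a modest dataset can suffice.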

This two-stage training enables a critical capability for real-world automation: zero-shot robot planning. A robot powered by V-JEPA 2 can be deployed in a new environment and successfully manipulate objects it has never encountered before, without being retrained for that specific setting.

This is a significant advance over previous models, which required training data from the exact robot and environment in which they would operate. The model was trained on an open-source dataset and then successfully deployed on different robots in Meta’s labs.

For example, to complete a task like picking up an object, the robot is given a goal image of the desired outcome. It then uses the V-JEPA 2 predictor to internally simulate a range of possible next moves. It scores each imagined action by how close it brings the scene to the goal, executes the top-rated action, and repeats the process until the task is complete.
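
The loop described above is a form of model-predictive control. Below is a minimal Python sketch, assuming the goal image has already been encoded into a goal embedding and using a hypothetical `predictor` function in place of the learned V-JEPA 2 predictor. The article does not say how candidate moves are generated; this sketch uses plain random sampling ("random shooting"), and the candidate count, action range, and tolerance are illustrative values.

```python
import math
import random


def predictor(embedding, action):
    """Hypothetical stand-in for a learned action-conditioned predictor."""
    return [z + 0.5 * a for z, a in zip(embedding, action)]


def distance(a, b):
    """Euclidean distance between two embeddings."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def plan_step(current, goal, rng, num_candidates=128, max_action=1.0):
    """Imagine many candidate actions and return the one whose predicted
    outcome lands closest to the goal embedding."""
    best_action, best_score = None, math.inf
    for _ in range(num_candidates):
        action = [rng.uniform(-max_action, max_action) for _ in current]
        score = distance(predictor(current, action), goal)
        if score < best_score:
            best_action, best_score = action, score
    return best_action


def run_episode(start, goal, tolerance=0.05, max_steps=50, seed=0):
    """Plan, execute the top-rated action, and repeat until close to the goal."""
    rng = random.Random(seed)
    state = list(start)
    for step in range(max_steps):
        if distance(state, goal) <= tolerance:
            return state, step
        action = plan_step(state, goal, rng)
        # Toy world: the "real" dynamics are identical to the predictor.
        state = predictor(state, action)
    return state, max_steps


final_state, steps = run_episode([0.0, 0.0], [1.0, -0.5])
print(steps, [round(v, 3) for v in final_state])
```

In this toy world the real dynamics match the predictor exactly, so the only imperfection comes from the random search. On a physical robot, the state after each move would come from re-encoding the camera image, which is what lets the loop correct for prediction errors as it goes.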

Using this method, the model achieved success rates between 65% and 80% on pick-and-place tasks with unfamiliar objects in new settings.

Real-world impact of physical reasoning

This ability to plan and act in novel situations has direct implications for business operations. In logistics and manufacturing, it allows for more adaptable robots that can handle variations in products and warehouse layouts without extensive reprogramming. This could be especially useful as companies explore deploying humanoid robots in factories and on assembly lines.

The same world model can power highly realistic digital twins, allowing companies to simulate new processes or train other AI systems in a physically accurate virtual environment. In industrial settings, a model could monitor video feeds of machinery and, based on its learned understanding of physics, predict safety issues and failures before they happen.

This research is a key step toward what Meta calls "advanced machine intelligence (AMI)," where AI systems can "learn about the world as humans do, plan how to execute unfamiliar tasks, and efficiently adapt to the ever-changing world around us."

Meta has released the model and its training code and hopes to "build a broad community around this research, driving progress toward our ultimate goal of developing world models that can transform the way AI interacts with the physical world."

What it means for enterprise technical decision-makers

V-JEPA 2 moves robotics closer to the software-defined model that cloud teams already recognize: pre-train once, deploy anywhere. Because the model learns general physics from public video and needs only a few dozen hours of task-specific footage, enterprises can shorten the data-collection cycle that typically drags down pilot projects. In practical terms, you could prototype a pick-and-place robot on an affordable desktop arm, then roll the same policy out to an industrial rig on the factory floor without gathering thousands of fresh samples or writing custom motion scripts.

Lower training overhead also reshapes the cost equation. At 1.2 billion parameters, V-JEPA 2 fits comfortably on a single high-end GPU, and its abstract prediction targets reduce the inference load further. That lets teams run closed-loop control on-premises or at the edge, avoiding cloud latency and the compliance headaches that come with streaming video outside the plant. Budget that once went to massive compute clusters can instead fund extra sensors, redundancy, or faster iteration cycles.
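
The single-GPU claim follows from simple arithmetic on the parameter count. The sketch below counts weight memory only; activations, video-frame buffers, and runtime overhead come on top, and the precisions listed are common choices rather than anything the article specifies for V-JEPA 2.

```python
def weight_memory_gb(num_params, bytes_per_param):
    """Memory needed to hold model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


PARAMS = 1.2e9  # parameter count cited in the article

# Common numeric precisions (assumed here, not taken from the article).
for label, nbytes in [("fp32", 4), ("bf16/fp16", 2)]:
    print(f"{label:>9}: {weight_memory_gb(PARAMS, nbytes):.1f} GB")
```

That works out to about 4.8 GB of weights at 32-bit precision and 2.4 GB at 16-bit precision, well within the memory of a single high-end GPU, leaving headroom for the activations that real-time video processing requires.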
